Computable Assessment in Practice: OpenStax Assignable + Quezzio

OpenStax has established a large-scale foundation for open educational content, delivering structured course materials used by instructors and students across a wide range of subjects and institutions. With the introduction of its teaching and learning platform, OpenStax Assignable, the focus expanded from content delivery to supporting large-scale engagement and assessment within the same environment. In mathematics in particular, this requires generating large volumes of problems and evaluating student responses to them while accommodating equivalent expressions, multiple solution paths and varying instructional approaches.

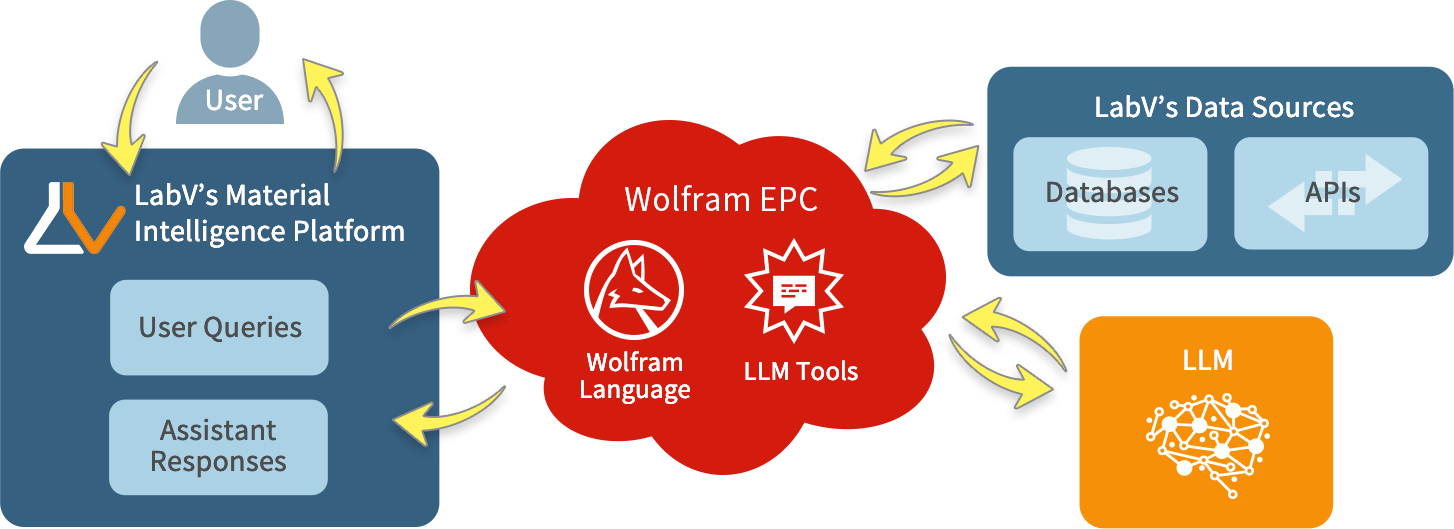

Wolfram Consulting worked with OpenStax to extend these capabilities by transforming textbook exercises into algorithmically generated questions using its existing Quezzio assessment system. The team adapted Quezzio’s generation and symbolic evaluation capabilities to produce new problem instances while preserving mathematical structure. This established a pipeline for extending fixed question sets into scalable, dynamic assessments within the Assignable platform.

Building Algorithmic Question Generators

The starting point for each question was the existing OpenStax course material, which was converted into programmatic templates within the Quezzio assessment system. Rather than defining a single static exercise, each template encodes the mathematical structure of a problem along with its parameter ranges and constraints.
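To make the idea of a template concrete, here is a minimal Python sketch of a generator for "solve ax + b = c" problems. It is not Quezzio's template format, which the article does not show; the class name `LinearEquationTemplate`, its fields and the chosen ranges are all hypothetical. The sketch only illustrates how structure, parameter ranges and constraints can live in one place.

```python
import random
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class LinearEquationTemplate:
    """Hypothetical template for 'Solve a*x + b = c' (illustrative only)."""
    a_range: range = range(2, 10)    # coefficient of x; 0 and 1 excluded on purpose
    b_range: range = range(-9, 10)   # constant term on the left side
    x_range: range = range(-12, 13)  # intended solution, picked first so it is an integer

    def generate(self, seed: int) -> dict:
        rng = random.Random(seed)    # seeded so the same instance can be rebuilt for grading
        while True:
            a = rng.choice(self.a_range)
            b = rng.choice(self.b_range)
            x = rng.choice(self.x_range)
            if b != 0 and x != 0:    # constraint: skip trivial variants
                break
        c = a * x + b                # right-hand side follows from the chosen solution
        sign = "+" if b > 0 else "-"
        return {
            "prompt": f"Solve {a}x {sign} {abs(b)} = {c}",
            "answer": Fraction(x),
            "params": (a, b, c),
        }
```

Choosing the solution first and deriving `c` from it is one simple way to guarantee that every generated instance has a clean answer.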

These templates were then refined through a rigorous verification process to ensure instructional quality and mathematical correctness. This included:

- Defining grading rules that reflect the intended form of an answer

- Validating parameter ranges to avoid degenerate or misleading cases

- Ensuring all generated instances are solvable and pedagogically appropriate

- Typesetting mathematical expressions for clarity

- Generating graphics that match problem parameters, with correct proportions and labeling

- Aligning with educational accessibility best practices
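Some of the checks in this list can be automated by walking the whole parameter space before a template ships. The Python sketch below audits a hypothetical quadratic template built from two intended integer roots. The check names and thresholds are assumptions chosen for illustration, not the rules used in the OpenStax project.

```python
from itertools import product


def instance(r1, r2):
    """Build x^2 + b*x + c = 0 from its two intended integer roots."""
    return {"b": -(r1 + r2), "c": r1 * r2, "roots": {r1, r2}}


# Hypothetical quality rules; each maps a label to a predicate on one instance.
CHECKS = {
    "two distinct roots": lambda q: len(q["roots"]) == 2,
    "no zero root": lambda q: 0 not in q["roots"],
    "nonzero linear term": lambda q: q["b"] != 0,
    "coefficients stay small": lambda q: abs(q["b"]) <= 12 and abs(q["c"]) <= 36,
}


def audit(root_range):
    """Return every (r1, r2) pair that breaks a rule, with the rules it breaks."""
    failures = []
    for r1, r2 in product(root_range, repeat=2):
        q = instance(r1, r2)
        broken = [name for name, ok in CHECKS.items() if not ok(q)]
        if broken:
            failures.append(((r1, r2), broken))
    return failures
```

Calling `audit(range(-6, 7))` lists the degenerate combinations (repeated roots, a zero root, opposite roots that cancel the linear term), which can then be excluded from the template's ranges or rejected at generation time.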

This process established a reliable pipeline for generating assessment content at scale. The result is a system that produces mathematically valid, well-formed problems with consistent grading behavior, ready for integration into an instructional platform.

Integrating the Generators into Assignable

Quezzio’s question generators and symbolic evaluation capabilities are integrated directly into the Assignable platform through a combination of API connections and Learning Tools Interoperability (LTI) integration. This allows Quezzio to operate within existing learning management system (LMS) workflows, receiving student input, evaluating responses and returning results without requiring a separate interface or external tool.
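The article does not say which LTI services or API endpoints are involved. Purely as an illustration of the receive, evaluate and return cycle, the Python sketch below assumes results are reported in the shape of an LTI Assignment and Grade Services score object. The field names in `build_score` follow that specification, while `handle_submission`, the request keys and the `grade` callable are hypothetical.

```python
from datetime import datetime, timezone


def build_score(user_id: str, earned: float, possible: float) -> dict:
    """Shape a result like an LTI Assignment and Grade Services score object."""
    return {
        "userId": user_id,
        "scoreGiven": earned,
        "scoreMaximum": possible,
        "activityProgress": "Completed",
        "gradingProgress": "FullyGraded",
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


def handle_submission(request: dict, grade) -> dict:
    """Hypothetical round trip: take a student response, grade it, return a score.

    request: assumed to carry 'user_id', 'instance_id' and 'response'.
    grade:   assumed callable (instance_id, response) -> (earned, possible).
    """
    earned, possible = grade(request["instance_id"], request["response"])
    return build_score(request["user_id"], earned, possible)
```

In a real deployment the returned object would be posted to the platform's score endpoint with the appropriate authorization, which is outside the scope of this sketch.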

As a result, the generators are not theoretical—they run inside the actual learning environment. Students encounter dynamically generated problems as part of their coursework, while Quezzio handles generation, evaluation and feedback behind the scenes within the platform.

What Students Experience

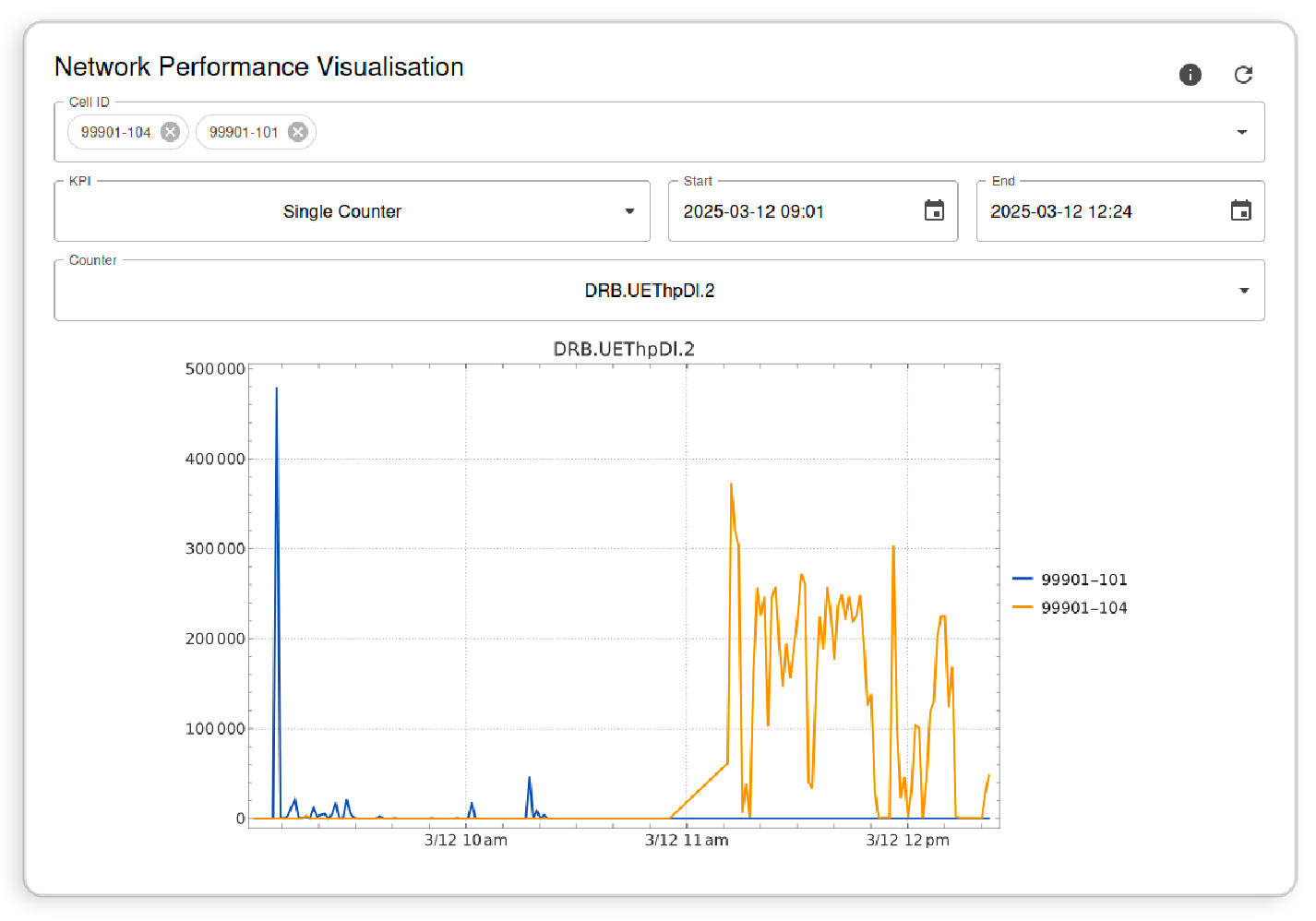

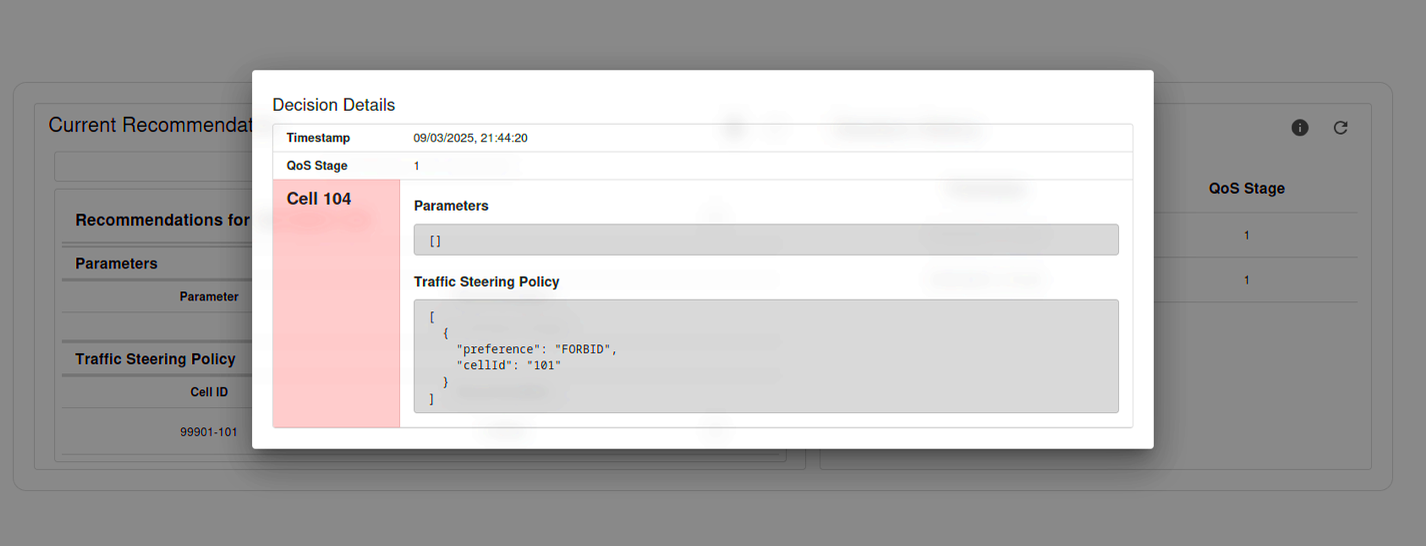

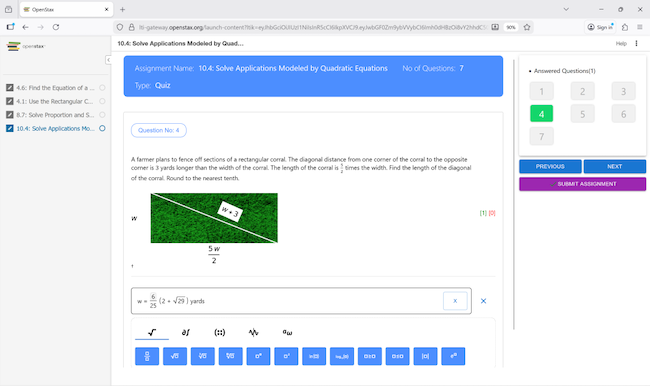

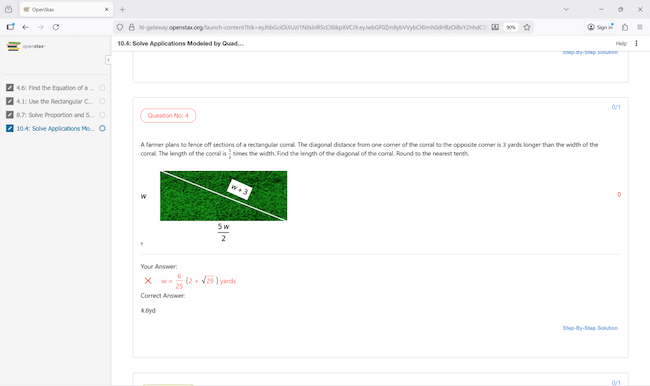

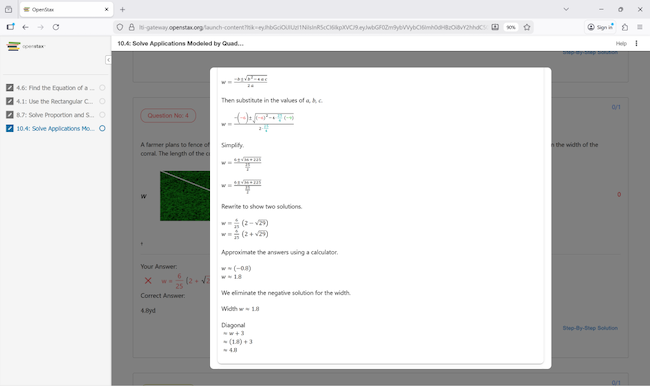

Within Elementary Algebra 2e in OpenStax Assignable, students encounter problems generated by the Quezzio system as part of their regular coursework. Each student receives a unique version of a problem based on the same underlying template, providing individualized practice while preserving the intended mathematical structure. Rather than choosing from multiple-choice options or matching a single fixed answer, students enter expressions directly, and those responses are evaluated symbolically.
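One common way to give each student a stable but distinct variant is to derive the random seed from identifiers. The Python sketch below assumes this approach; the article does not describe how Quezzio assigns variants, and `variant_seed`, `variant` and the identifier strings are hypothetical.

```python
import hashlib
import random


def variant_seed(template_id: str, student_id: str, attempt: int = 1) -> int:
    """Derive a reproducible 64-bit seed from the identifiers."""
    key = f"{template_id}|{student_id}|{attempt}".encode("utf-8")
    # hashlib is used instead of hash(), which is randomized per process for strings.
    return int.from_bytes(hashlib.sha256(key).digest()[:8], "big")


def variant(template_id: str, student_id: str, attempt: int = 1):
    """Return (prompt, answer) for one student's version of 'a*x + b = c'."""
    rng = random.Random(variant_seed(template_id, student_id, attempt))
    a, b = rng.randint(2, 9), rng.randint(1, 9)
    x = rng.randint(-9, 9)
    return f"Solve {a}x + {b} = {a * x + b}", x
```

Because the seed depends only on the identifiers, the grader can rebuild exactly the instance a student saw without storing it, and a new attempt number yields a fresh variant.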

Quezzio evaluates whether a student’s input is mathematically equivalent to the expected answer and structurally valid, allowing multiple correct forms of an answer while enforcing the intended solution method. When a response is incorrect, step-by-step solutions are generated from the symbolic evaluation of that specific problem instance, showing where the student’s reasoning diverged. The system can also interpret answers with units in multiple valid forms, for example recognizing inputs such as “$5,” “5 dollars,” “5 USD” or “500 cents” as equivalent when appropriate.
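Two of these behaviors can be approximated in a few lines of Python. The sketch below is not Quezzio's method: in place of true symbolic evaluation it compares two expressions in `x` by exact rational arithmetic at sampled points, and it normalizes a handful of currency spellings to cents. The function names, the supported syntax (explicit `*`, `**` with integer exponents) and the unit table are all assumptions.

```python
import ast
import random
import re
from fractions import Fraction


def _value(node, x):
    """Evaluate a small arithmetic syntax tree exactly at x (a Fraction)."""
    if isinstance(node, ast.Expression):
        return _value(node.body, x)
    if isinstance(node, ast.Constant) and type(node.value) is int:
        return Fraction(node.value)
    if isinstance(node, ast.Name) and node.id == "x":
        return x
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_value(node.operand, x)
    if isinstance(node, ast.BinOp):
        left = _value(node.left, x)
        if isinstance(node.op, ast.Pow):
            exp = node.right  # only non-negative integer literals, so results stay exact
            if isinstance(exp, ast.Constant) and type(exp.value) is int and exp.value >= 0:
                return left ** exp.value
            raise ValueError("unsupported exponent")
        right = _value(node.right, x)
        if isinstance(node.op, ast.Add):
            return left + right
        if isinstance(node.op, ast.Sub):
            return left - right
        if isinstance(node.op, ast.Mult):
            return left * right
        if isinstance(node.op, ast.Div):
            return left / right
    raise ValueError("unsupported syntax")


def equivalent(student: str, expected: str, points: int = 8) -> bool:
    """Sampling-based equivalence test (a stand-in for symbolic comparison)."""
    a = ast.parse(student, mode="eval")
    b = ast.parse(expected, mode="eval")
    rng = random.Random(0)  # fixed seed keeps grading deterministic
    agreed = 0
    for _ in range(points * 10):
        x = Fraction(rng.randint(-50, 50), rng.randint(1, 7))
        try:
            if _value(a, x) != _value(b, x):
                return False
        except ZeroDivisionError:
            continue  # sample point outside a domain; try another
        agreed += 1
        if agreed == points:
            return True
    return False  # too few usable sample points to decide


# Hypothetical unit table: how many cents one of each unit is worth.
_UNIT_CENTS = {"$": 100, "dollar": 100, "dollars": 100, "usd": 100, "cent": 1, "cents": 1}


def to_cents(text: str) -> Fraction:
    """Normalize inputs such as '$5', '5 dollars', '5 USD' or '500 cents'."""
    m = re.fullmatch(r"\s*(\$)?\s*(\d+(?:\.\d+)?)\s*([A-Za-z]*)\s*", text)
    if not m:
        raise ValueError(f"cannot parse {text!r}")
    prefix, number, word = m.groups()
    unit = prefix or word.lower()
    if (prefix and word) or unit not in _UNIT_CENTS:
        raise ValueError(f"missing or unknown unit in {text!r}")
    return Fraction(number) * _UNIT_CENTS[unit]
```

With these definitions, `equivalent("2*(x + 3)", "2*x + 6")` is true and the four currency spellings quoted above all normalize to 500 cents. Point sampling cannot check the form of an answer (factored versus expanded, for instance), so enforcing an intended solution method would need a separate structural test.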

The accompanying images show how this operates in practice within the course environment, from problem generation through evaluation and feedback.


Data-Driven Question Generation

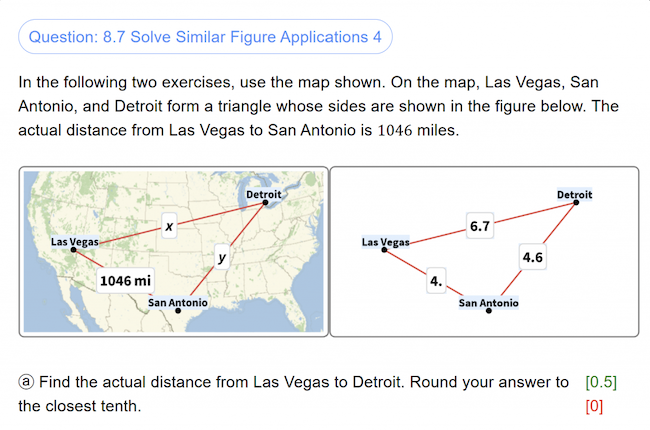

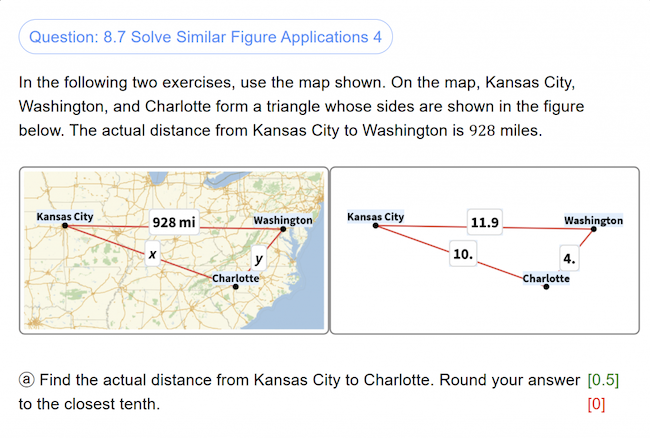

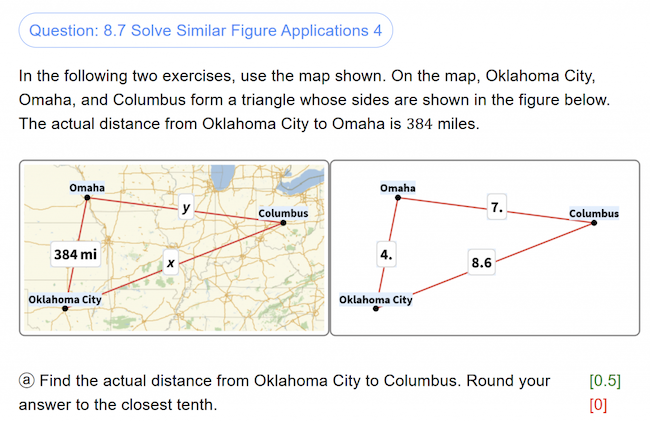

The same Quezzio infrastructure also supports data-driven question generation using Wolfram’s knowledgebase. In some problems, parameters are derived from real-world data, such as geographic locations or measured quantities.

For example, a question may select cities based on population data, compute distances between them and generate a corresponding to-scale map, ensuring that each instance is both mathematically valid and grounded in accurate data.
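The distance and scale arithmetic behind such a question can be sketched in Python. This is an illustration, not the Wolfram implementation: the coordinates are approximate city-center values hard-coded here in place of a knowledgebase lookup, selection by population is omitted, and `great_circle_km`, `map_question` and the default scale are hypothetical.

```python
import math

# Approximate city-center coordinates in degrees (latitude, longitude).
# In the system described above these would come from curated data, not a literal table.
CITIES = {
    "New York": (40.7128, -74.0060),
    "Los Angeles": (34.0522, -118.2437),
    "Chicago": (41.8781, -87.6298),
}

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def map_question(city_a, city_b, km_per_cm=250):
    """Build a scale-reading problem whose numbers match the real distance."""
    true_km = great_circle_km(CITIES[city_a], CITIES[city_b])
    map_cm = round(true_km / km_per_cm, 1)  # distance a student would measure on the map
    return {
        "prompt": (f"On a map with scale 1 cm : {km_per_cm} km, {city_a} and "
                   f"{city_b} are {map_cm} cm apart. How far apart are the cities?"),
        "map_cm": map_cm,
        "answer_km": round(map_cm * km_per_cm),
    }
```

Deriving the map measurement from the computed distance, rather than choosing it freely, is what keeps the drawn map, the stated scale and the expected answer consistent with one another.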


This approach extends beyond individual problems to broader assessment design. Question generators can be adapted to reflect different teaching styles, align with local or regional curricula and scale across courses and institutions. Rather than rely on fixed question banks, institutions can generate assessments that match their own instructional goals while maintaining consistency, accuracy and flexibility.

Extending This Approach to Other Learning Systems

The same process used to build computable assessments for OpenStax can be applied to other course materials and instructional environments. Many institutions rely on static question banks that are difficult to scale, adapt or align with specific teaching approaches. Converting those materials into algorithmic generators makes it possible to produce large volumes of consistent, flexible assessment content while preserving the structure and intent of the original curriculum.

This approach allows institutions to generate assessments tailored to their own instructional goals rather than rely on generic content. Question sets can be adapted to reflect different teaching styles, align with regional or institutional curricula and scale across courses and programs. Existing content can be converted into dynamic formats that support randomization, symbolic evaluation and automated grading without sacrificing accuracy or control.

Wolfram works with organizations like OpenStax to transform existing content into computable assessment systems, integrating them into existing platforms and workflows to support scalable, curriculum-aligned instruction.

Wolfram can help you convert your existing content into scalable, computable assessment.