Computable Assessment in Practice: OpenStax Assignable + Quezzio

Client Results (12)News (2)

OpenStax has established a large-scale foundation for open educational content, delivering structured course materials used by instructors and students across a wide range of subjects and institutions. With the introduction of its teaching and learning platform, OpenStax Assignable, the focus expanded from content delivery to supporting large-scale engagement and assessment within the same environment. In mathematics in particular, this requires generating and evaluating large volumes of problems while accommodating equivalent expressions, multiple solution paths and varying instructional approaches.

Wolfram Consulting worked with OpenStax to extend these capabilities by transforming textbook exercises into algorithmically generated questions using its existing Quezzio assessment system. The team adapted Quezzio’s generation and symbolic evaluation capabilities to produce new problem instances while preserving mathematical structure. This established a pipeline for extending fixed question sets into scalable, dynamic assessments within the Assignable platform.

Building Algorithmic Question Generators

The starting point for each question was the existing OpenStax course material, which was converted into programmatic templates within the Quezzio assessment system. Beyond defining a single static exercise, each template encodes the mathematical structure of a problem along with parameter ranges and constraints.

These templates were then refined through a rigorous verification process to ensure instructional quality and mathematical correctness. This included:

- Defining grading rules that reflect the intended form of an answer

- Validating parameter ranges to avoid degenerate or misleading cases

- Ensuring all generated instances are solvable and pedagogically appropriate

- Typesetting mathematical expressions for clarity

- Generating graphics that match problem parameters, with correct proportions and labeling

- Aligning with educational accessibility best practices

This process established a reliable pipeline for generating assessment content at scale. The result is a system that produces mathematically valid, well-formed problems with consistent grading behavior, ready for integration into an instructional platform.
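As an illustration of what "parameter ranges and constraints" can mean in practice, here is a minimal Python sketch of a template for the exercise family `a*x + b = c`. It is not Quezzio's implementation: the class name, the parameter ranges and the integer-answer rule are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class LinearEquationInstance:
    """One generated instance of the hypothetical template a*x + b = c."""
    a: int
    b: int
    c: int

    @property
    def prompt(self) -> str:
        sign = "+" if self.b >= 0 else "-"
        return f"Solve for x: {self.a}x {sign} {abs(self.b)} = {self.c}"

    @property
    def answer(self) -> Fraction:
        # Exact arithmetic, so the stored answer never suffers rounding error.
        return Fraction(self.c - self.b, self.a)


def generate_instance(rng: random.Random) -> LinearEquationInstance:
    """Draw parameters from fixed ranges and reject degenerate cases."""
    while True:
        a = rng.randint(-9, 9)
        b = rng.randint(-12, 12)
        c = rng.randint(-20, 20)
        if a in (0, 1, -1):
            continue  # a = 0 has no unique solution; |a| = 1 skips the division step
        if b == 0:
            continue  # would collapse into a one-step problem
        if Fraction(c - b, a).denominator != 1:
            continue  # assumed pedagogical rule: integer answers only
        return LinearEquationInstance(a, b, c)


if __name__ == "__main__":
    rng = random.Random(2024)  # a fixed seed makes an instance reproducible
    instance = generate_instance(rng)
    print(instance.prompt, "->", instance.answer)
```

Rejection sampling keeps the constraints readable as a checklist, which mirrors the verification steps listed above: each `continue` corresponds to one rule a reviewer can inspect.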

Integrating the Generators into Assignable

Quezzio’s question generators and symbolic evaluation capabilities are integrated directly into the Assignable platform through a combination of API connections and LTI integration. This allows Quezzio to operate within existing learning management system (LMS) workflows, receiving student input, evaluating responses and returning results without requiring a separate interface or external tool.

As a result, the generators are not theoretical—they run inside the actual learning environment. Students encounter dynamically generated problems as part of their coursework, while Quezzio handles generation, evaluation and feedback behind the scenes within the platform.

What Students Experience

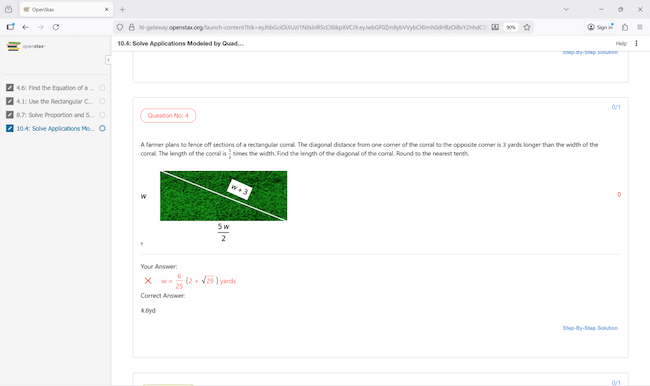

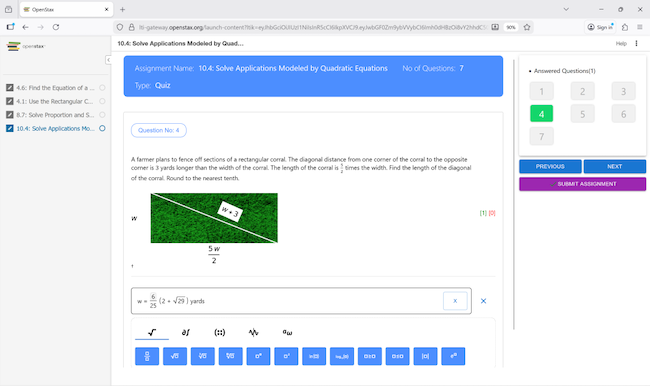

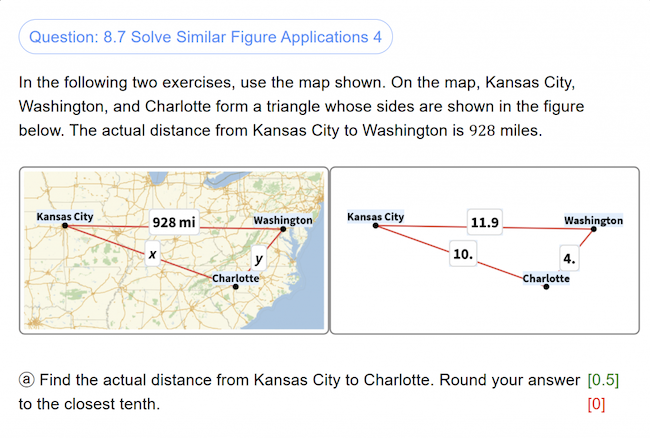

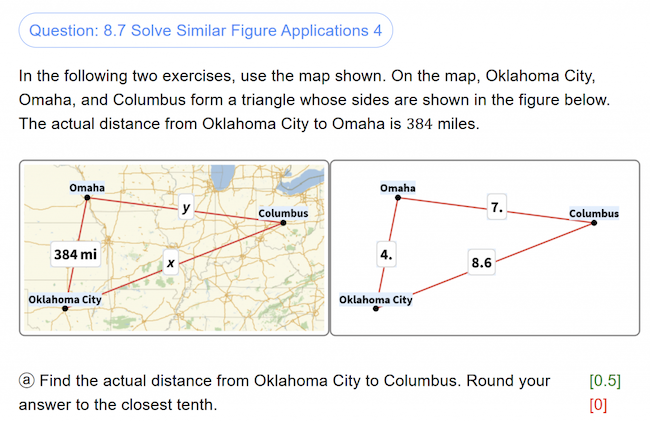

Within Elementary Algebra 2e in OpenStax Assignable, students encounter problems generated by the Quezzio system as part of their regular coursework. Each student receives a unique version of a problem based on the same underlying template, providing individualized practice while preserving the intended mathematical structure. Beyond multiple-choice formats or fixed answers, students enter expressions directly, and those responses are evaluated symbolically.
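To show how grading an entered expression differs from matching a fixed string, the sketch below checks a numeric answer for equivalence and, separately, for form. It uses exact rational arithmetic from Python's standard library as a simplified stand-in for a symbolic engine. The function name, the result labels and the lowest-terms rule are assumptions for illustration, not the system's actual grading rules.

```python
import re
from fractions import Fraction
from math import gcd


def grade(student_input: str, expected: Fraction,
          require_lowest_terms: bool = True) -> str:
    """Classify an answer by value first, then by the form it was written in."""
    text = student_input.strip().replace(" ", "")
    try:
        value = Fraction(text)  # accepts "1/2", "0.5", "3", and similar
    except (ValueError, ZeroDivisionError):
        return "unparseable"
    if value != expected:
        return "incorrect"
    # The value is right; now check whether a written fraction is simplified.
    match = re.fullmatch(r"([+-]?\d+)/(\d+)", text)
    if require_lowest_terms and match:
        numerator, denominator = int(match.group(1)), int(match.group(2))
        if gcd(numerator, denominator) != 1:
            return "equivalent but not simplified"
    return "correct"


if __name__ == "__main__":
    for attempt in ["1/2", "0.5", "2/4", "1/3", "one half"]:
        print(f"{attempt!r}: {grade(attempt, Fraction(1, 2))}")
```

Separating the value check from the form check lets a template accept several correct forms while still flagging an answer that skips a required simplification step.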

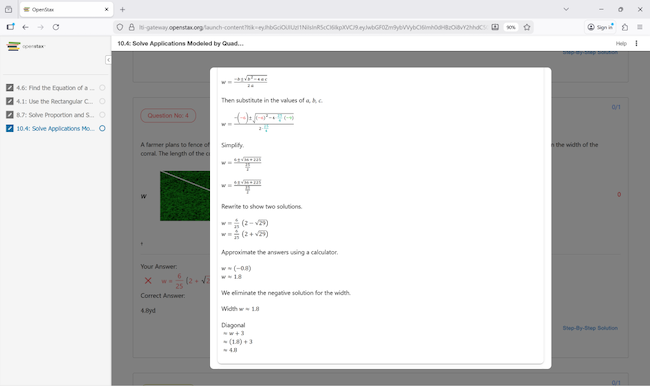

Quezzio evaluates whether a student’s input is mathematically equivalent to the expected answer and structurally valid, which allows multiple correct forms of an answer while still enforcing the intended solution method. When a response is incorrect, step-by-step solutions are generated from the symbolic evaluation of that specific problem instance, showing where reasoning diverged. The system can also interpret answers with units in multiple valid forms, for example recognizing inputs such as “$5,” “5 dollars,” “5 USD” or “500 cents” as equivalent when appropriate.
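The currency example can be pictured as normalizing every accepted form to one base unit before comparing. The following is a minimal sketch under that assumption. The unit table and the function name `to_cents` are hypothetical, and a production system would draw on a much richer unit model.

```python
import re
from decimal import Decimal
from typing import Optional

# Hypothetical unit table: how many cents one unit is worth.
CENTS_PER_UNIT = {"dollar": 100, "dollars": 100, "usd": 100,
                  "cent": 1, "cents": 1}


def to_cents(text: str) -> Optional[Decimal]:
    """Normalize a money answer to cents, or return None if unrecognized."""
    s = text.strip().lower()
    # Form 1: leading dollar sign, e.g. "$5" or "$5.50".
    match = re.fullmatch(r"\$\s*(\d+(?:\.\d+)?)", s)
    if match:
        return Decimal(match.group(1)) * 100
    # Form 2: number followed by a unit word, e.g. "5 dollars" or "500 cents".
    match = re.fullmatch(r"(\d+(?:\.\d+)?)\s*([a-z]+)", s)
    if match and match.group(2) in CENTS_PER_UNIT:
        return Decimal(match.group(1)) * CENTS_PER_UNIT[match.group(2)]
    return None


if __name__ == "__main__":
    for answer in ["$5", "5 dollars", "5 USD", "500 cents", "5 euros"]:
        print(f"{answer!r}: {to_cents(answer)}")
```

Using `Decimal` rather than floating point keeps amounts such as "$0.10" exact, so two answers compare equal only when they represent the same amount.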

The following images show how this operates in practice within the course environment, from problem generation through evaluation and feedback.

Data-Driven Question Generation

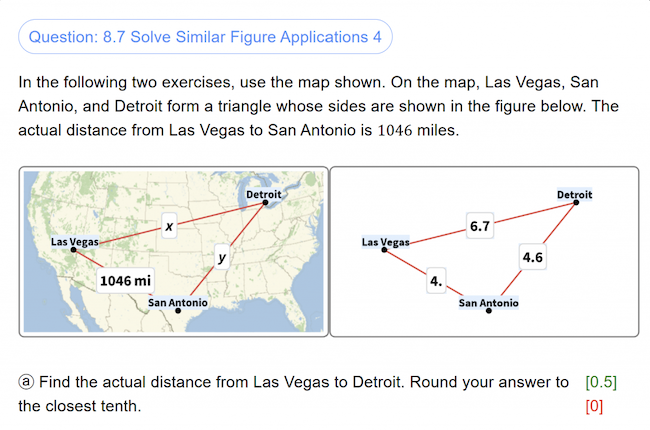

The same Quezzio infrastructure also supports data-driven question generation using Wolfram’s knowledgebase. In some problems, parameters are derived from real-world data, such as geographic locations or measured quantities.

For example, a question may select cities based on population data, compute distances between them and generate a corresponding to-scale map, ensuring that each instance is both mathematically valid and grounded in accurate data.
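A rough sketch of that flow in Python: filter candidate cities by population, pick a pair, and compute the great-circle distance with the haversine formula. The city records below are placeholders invented for the example, not real data, and the helper names are assumptions. The actual system draws its parameters from Wolfram's knowledgebase.

```python
import random
from itertools import combinations
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = phi2 - phi1
    dlambda = radians(lon2 - lon1)
    h = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


# Placeholder records (hypothetical values, for illustration only).
CITIES = [
    {"name": "City A", "population": 2_500_000, "lat": 40.0, "lon": -90.0},
    {"name": "City B", "population": 1_200_000, "lat": 35.0, "lon": -80.0},
    {"name": "City C", "population": 40_000, "lat": 45.0, "lon": -100.0},
]


def pick_city_pair(cities, min_population, rng):
    """Choose two sufficiently large cities and return names plus distance."""
    eligible = [c for c in cities if c["population"] >= min_population]
    first, second = rng.choice(list(combinations(eligible, 2)))
    distance = haversine_km(first["lat"], first["lon"],
                            second["lat"], second["lon"])
    return first["name"], second["name"], round(distance)


if __name__ == "__main__":
    print(pick_city_pair(CITIES, 1_000_000, random.Random(1)))
```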

This approach extends beyond individual problems to broader assessment design. Question generators can be adapted to reflect different teaching styles, align with local or regional curricula and scale across courses and institutions. Rather than rely on fixed question banks, institutions can generate assessments that match their own instructional goals while maintaining consistency, accuracy and flexibility.

Extending This Approach to Other Learning Systems

The same process used to build computable assessments for OpenStax can be applied to other course materials and instructional environments. Many institutions rely on static question banks that are difficult to scale, adapt or align with specific teaching approaches. Converting those materials into algorithmic generators makes it possible to produce large volumes of consistent, flexible assessment content while preserving the structure and intent of the original curriculum.

This approach allows institutions to generate assessments tailored to their own instructional goals rather than rely on generic content. Question sets can be adapted to reflect different teaching styles, align with regional or institutional curricula and scale across courses and programs. Existing content can be converted into dynamic formats that support randomization, symbolic evaluation and automated grading without sacrificing accuracy or control.

Wolfram works with organizations like OpenStax to transform existing content into computable assessment systems, integrating them into existing platforms and workflows to support scalable, curriculum-aligned instruction.

Wolfram can help you convert your existing content into scalable, computable assessment.

Advancing Electric Truck Simulation with Wolfram Tools: Wolfram Consulting Group

Heavy-duty electric trucks don’t have the luxury of wasted heat. Every watt must either be stored or redirected, which makes thermal control one of the hardest parts of vehicle design. For a global manufacturer of commercial freight vehicles developing its next electric platform, simulation speed became the barrier. Each test cycle ran for hours, slowing every decision about how to warm the cabin and protect the batteries.

Wolfram Consulting replaced that process with a unified computational model and a neural network able to predict and correct thermal behavior in real time. What once ran overnight now runs in seconds.

The Challenge

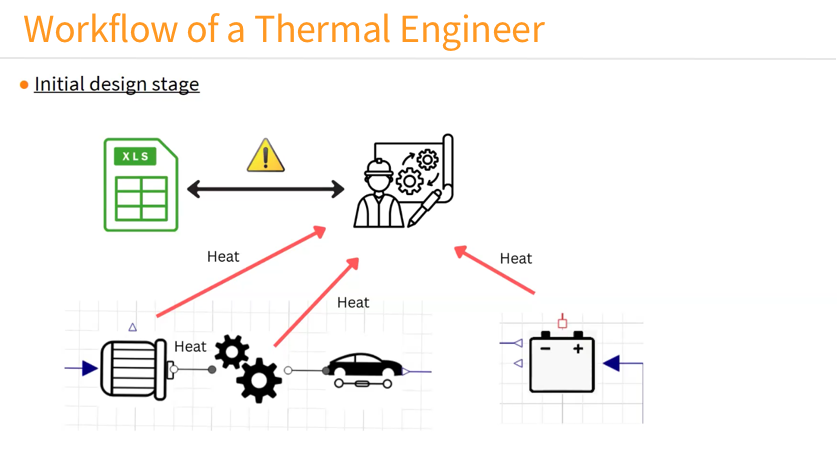

Designing the thermal system of a heavy-duty electric truck means balancing efficiency with durability and driver comfort. The job demands precise control of temperature across batteries, motors and cabin spaces, while keeping energy use as low as possible.

At the start of development, separate engineering groups produced their own heat data for a four-hour reference route between company facilities. The battery team modeled pack heating, the driveline group estimated motor losses, and the cabin group focused on air-handling loads. The thermal group then merged these datasets by hand in spreadsheets to approximate whole-system behavior.

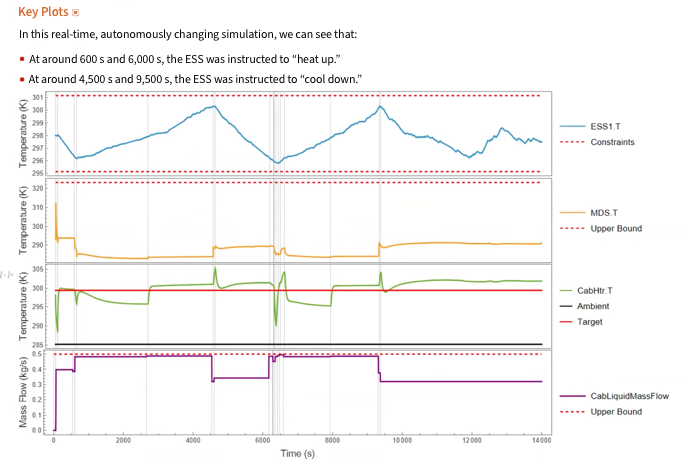

Detailed GT-Suite runs that took hours were reproduced in tens of seconds in System Modeler. Closed-loop control experiments that adjust valves and pumps on the fly were subject to a real-time simulation constraint: they had to run at 1× speed, which is why those validation runs were executed overnight.

The Solution

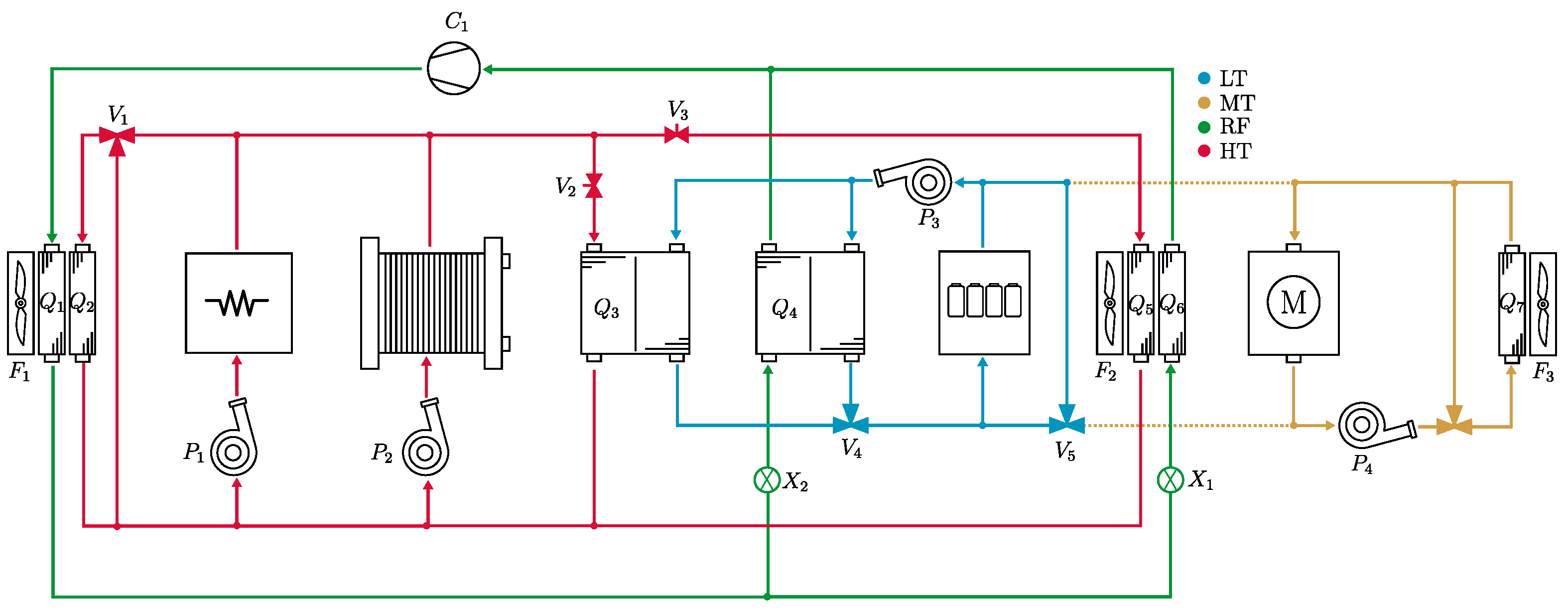

Wolfram Consulting delivered a two-step solution: first, a high-fidelity System Modeler framework that replaced manual thermal analysis, and second, a neural network trained on those simulations to predict and optimize real-time performance.

The team replaced static spreadsheets with a computable model of the truck’s thermal circuit, similar to the one shown here. Engineers built a two-tier System Modeler library for early exploration and for detailed dynamics. The first tier used simplified components with basic mass-flow inputs, so engineers could visualize and test circuit concepts before detailed data existed. The second tier added hydraulic flow and pressure behavior, including pipe diameters, pump curves and coolant properties that vary with temperature and pressure.
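To give a feel for what a first-tier component with a basic mass-flow input might compute, here is a minimal lumped-parameter sketch in Python rather than Modelica. It treats one component as a single thermal mass cooled by a coolant stream that leaves at the component temperature. Every numeric value (heat load, flow rate, heat capacity, coolant specific heat) is an assumed placeholder, and this is not the System Modeler library described above.

```python
def steady_state_temp_c(heat_w: float, m_dot_kg_s: float, t_inlet_c: float,
                        cp_j_per_kg_k: float = 3500.0) -> float:
    """Temperature at which coolant removes exactly the generated heat."""
    return t_inlet_c + heat_w / (m_dot_kg_s * cp_j_per_kg_k)


def simulate_temp_c(heat_w: float, m_dot_kg_s: float, t_inlet_c: float,
                    t_start_c: float, duration_s: float, dt_s: float = 1.0,
                    capacity_j_per_k: float = 200_000.0,
                    cp_j_per_kg_k: float = 3500.0) -> list:
    """Explicit-Euler integration of C * dT/dt = Q - m_dot * cp * (T - T_in)."""
    temps = [t_start_c]
    temp = t_start_c
    for _ in range(int(duration_s / dt_s)):
        cooling_w = m_dot_kg_s * cp_j_per_kg_k * (temp - t_inlet_c)
        temp += dt_s * (heat_w - cooling_w) / capacity_j_per_k
        temps.append(temp)
    return temps


if __name__ == "__main__":
    # Assumed operating point: 2 kW heat load, 0.1 kg/s coolant at 20 degrees C.
    trace = simulate_temp_c(2000.0, 0.1, 20.0, 20.0, duration_s=4 * 3600)
    print(f"final: {trace[-1]:.3f} C, "
          f"steady state: {steady_state_temp_c(2000.0, 0.1, 20.0):.3f} C")
```

A second-tier model would replace the fixed mass flow with one computed from pump curves, pipe geometry and temperature-dependent coolant properties, which is where an equation-based tool earns its keep.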

These models produced results consistent with GT-Suite in a fraction of the time. A full run of the same route that once took three to four hours could be completed in about 35 seconds. The library enabled rapid iteration and reuse across projects, giving the thermal group a practical foundation that matched operating conditions.
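Taking the quoted figures at face value, the implied speedup for the reference route works out as follows:

$$
\frac{3 \times 3600\ \text{s}}{35\ \text{s}} \approx 309,
\qquad
\frac{4 \times 3600\ \text{s}}{35\ \text{s}} \approx 411
$$

That is roughly a 300- to 400-fold reduction in wall-clock time per run.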

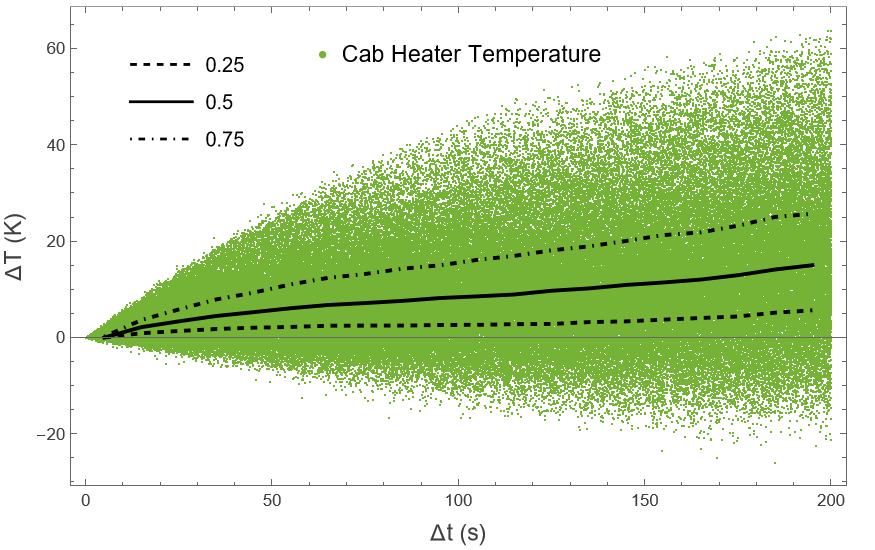

Once validated, the team generated steady-state simulations spanning valve positions and pump speeds under varied operating conditions. The resulting data trained a neural network that learned how each configuration affected the battery systems and the cabin. It could predict future temperatures and, more importantly, compute the control settings needed to hit a target.

This invertible approach made the network an adaptive control layer. During a full cycle, the system adjusted parameters in real time to avoid under- or over-temperature events. When battery temperature fell below range, the controller identified the precise valve and pump changes needed to recover. It could also ease compressor load when temperatures held steadily within limits.
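The idea of using a model of steady-state behavior in both directions can be sketched without a neural network. In the Python example below, a lookup table built from a toy function stands in for the trained network: the forward direction reads a temperature for given settings, and the inverse direction searches for the settings that best hit a target. The toy physics, the grid resolution and the tie-breaking rule are all assumptions made for illustration.

```python
from itertools import product


def toy_steady_state_temp_c(valve_open: float, pump_speed: float,
                            ambient_c: float = -10.0) -> float:
    """Hypothetical stand-in for one steady-state simulation run."""
    # Made-up relation: more valve opening and pump speed deliver more heat.
    return ambient_c + 45.0 * valve_open * pump_speed


def build_table(steps: int = 10) -> dict:
    """Tabulate temperature over a grid of (valve, pump) settings in [0, 1]."""
    grid = [i / steps for i in range(steps + 1)]
    return {(v, p): toy_steady_state_temp_c(v, p) for v, p in product(grid, grid)}


def settings_for_target(table: dict, target_c: float) -> tuple:
    """Inverse query: settings whose predicted temperature is closest to target.

    Ties are broken in favor of the lower pump speed, as a proxy for energy use.
    """
    return min(table, key=lambda vp: (abs(table[vp] - target_c), vp[1]))


if __name__ == "__main__":
    table = build_table()
    valve, pump = settings_for_target(table, target_c=20.0)
    print(f"valve={valve:.1f}, pump={pump:.1f}, "
          f"predicted={table[(valve, pump)]:.1f} C")
```

A trained network plays the role of `table` over a continuous, higher-dimensional space, which is what makes the inverse query fast enough to run inside a control loop.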

Together, the simulation framework and control layer created a self-correcting thermal system that improved speed and accuracy.

The Results

The framework delivered immediate gains. Engineers moved from multi-hour runs to seconds-scale iteration, testing new circuit layouts and control strategies that had been impossible to study before.

The adaptive control layer turned static calibration into a data-driven process. Instead of tuning modes by hand, the system maintained target ranges automatically during validation, adjusting pump speeds and valve positions to keep all components within their limits.

The libraries are now used beyond the initial program. The battery group is modeling internal heat generation, and the controls team has integrated the thermal model through FMI for co-simulation. Working from the same computable foundation, teams report faster decisions and more consistent results.

Building a Scalable Modeling Standard

By replacing a slow, siloed workflow with a unified computational framework, Wolfram Consulting turned thermal modeling from a bottleneck into a continuous design process. What began as a single EV program has become a shared modeling standard across engineering groups and now extends to electrical and control work.

When you need to speed up simulations or optimize system performance, Wolfram Consulting can help you build models that deliver faster, more reliable results.

Solving the Data Bottleneck: LabV’s Six-Week Path to Real-Time Insight and Quality Control

Labs generate more data than they can easily use. The result? Manual workflows and fragmented tools slow down decisions. LabV, a lab data management company, decided to design a system that makes it faster to identify issues and communicate them clearly—both internally and externally.

LabV worked with Wolfram Consulting to build a digital assistant for real-time lab data analysis. A single prompt now surfaces trends that once took hours to uncover. In one case, it revealed a correlation between density and heat resistance—guiding product decisions without manual analysis or coding. This made LabV one of the first AI-powered solutions for material testing labs, combining AI’s fluency with a computational foundation so every output is both fast and verifiable.

The Prerequisites for Smarter Analysis

Meeting regulatory standards requires collecting and analyzing hundreds of data points across batches and suppliers. But LabV’s instruments weren’t fully connected to its laboratory information management system (LIMS), and much of the data still lived in spreadsheets. Even routine retrieval was slow. Deeper comparisons—like tracking material behavior by supplier—were often skipped entirely.

While generative AI tools were readily available, they couldn’t access LabV’s proprietary datasets or guarantee accurate answers. Without a way to ground responses in the lab’s own data, AI risked producing results that looked plausible but couldn’t be trusted. Research shows that disorganized, siloed data delays batch approvals, extends development cycles and drives up costs by consuming engineering hours in redundant work.

More fundamentally, the fragmented system made automation impossible. Traditional LIMS platforms weren’t designed for complex data integration or analysis at scale. To move forward, LabV had to build a centralized, structured dataset—one capable of supporting real-time decisions and enabling smarter tools. Without that foundation, AI was just out of reach.

Making Data Usable at Scale

LabV couldn’t move forward until it fixed its data layer. Traditional LIMS systems aren’t built to connect every instrument or consolidate outputs. Wolfram helped create a unified framework where test results could be collected, structured and searched—laying the groundwork for automation and eventual AI-powered analysis.
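As a rough picture of what "collected, structured and searched" involves, the sketch below maps two differently shaped inputs, a spreadsheet export and an instrument payload, onto one record type and then filters it. The schema, column names, payload keys and sample values are all hypothetical. Nothing here reflects LabV's actual data model.

```python
import csv
import io
from dataclasses import dataclass


@dataclass(frozen=True)
class LabResult:
    """Unified record for one measurement (hypothetical schema)."""
    batch: str
    supplier: str
    property_name: str
    value: float
    unit: str
    source: str


def from_spreadsheet(csv_text: str) -> list:
    """Convert rows of an assumed spreadsheet export into unified records."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return [
        LabResult(batch=row["Batch"].strip(), supplier=row["Supplier"].strip(),
                  property_name=row["Test"].strip().lower(),
                  value=float(row["Result"]), unit=row["Unit"].strip(),
                  source="spreadsheet")
        for row in rows
    ]


def from_instrument(payload: dict) -> LabResult:
    """Convert an assumed instrument payload into a unified record."""
    return LabResult(batch=payload["batch_id"], supplier=payload["supplier"],
                     property_name=payload["measurement"].lower(),
                     value=float(payload["reading"]), unit=payload["unit"],
                     source="instrument")


def search(results: list, **criteria) -> list:
    """Return records whose fields equal every given criterion."""
    return [r for r in results
            if all(getattr(r, key) == wanted for key, wanted in criteria.items())]


if __name__ == "__main__":
    sheet = "Batch,Supplier,Test,Result,Unit\nB-001,Supplier X,Density,1.21,g/cm3\n"
    results = from_spreadsheet(sheet)
    results.append(from_instrument({"batch_id": "B-002", "supplier": "Supplier X",
                                    "measurement": "Density", "reading": 1.19,
                                    "unit": "g/cm3"}))
    print(search(results, supplier="Supplier X", property_name="density"))
```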

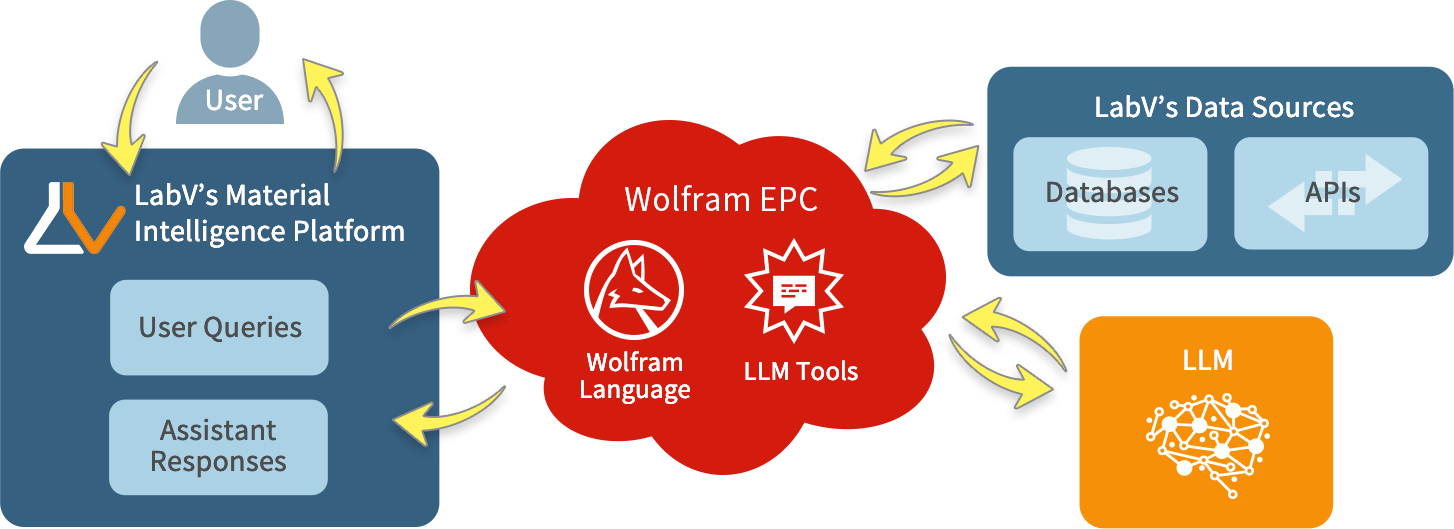

Once the data was structured, LabV and Wolfram Consulting built a digital assistant to work on top of it. The interface relies on a large language model to process natural language queries, while Wolfram’s back end handles the actual analysis—ensuring accuracy and preventing AI hallucinations. The assistant’s chat interface connects through Wolfram Enterprise Private Cloud, which coordinates LabV’s structured datasets, Wolfram’s computation results and the language model output. This architecture ensures every response comes from verified data and is computed with the same algorithms used in scientific and engineering applications.
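The division of labor described here, with a language model interpreting the question and a deterministic back end computing the answer, can be sketched as follows. The language model is replaced by a keyword-matching stub, and the request format, field names and sample data are assumptions for illustration only. The point is that the number in the response comes from computation on the dataset, not from generated text.

```python
from math import sqrt


def pearson(xs: list, ys: list) -> float:
    """Pearson correlation coefficient, computed directly from its definition."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / sqrt(var_x * var_y)


def stub_language_model(question: str) -> dict:
    """Placeholder for the language model: text in, structured request out."""
    q = question.lower()
    if "correlat" in q:
        known = ("density", "heat_resistance")  # hypothetical field names
        fields = [f for f in known if f.replace("_", " ") in q]
        return {"operation": "correlation", "fields": fields}
    return {"operation": "unknown", "fields": []}


def answer(question: str, dataset: list) -> dict:
    """Route the structured request to a deterministic computation."""
    request = stub_language_model(question)
    if request["operation"] == "correlation" and len(request["fields"]) == 2:
        x_name, y_name = request["fields"]
        value = pearson([row[x_name] for row in dataset],
                        [row[y_name] for row in dataset])
        return {"operation": "correlation", "fields": [x_name, y_name],
                "value": value, "n": len(dataset)}
    return {"operation": "unsupported"}


if __name__ == "__main__":
    # Hypothetical measurements, invented for the example.
    data = [{"density": 1.0, "heat_resistance": 200.0},
            {"density": 1.1, "heat_resistance": 210.0},
            {"density": 1.2, "heat_resistance": 220.0},
            {"density": 1.3, "heat_resistance": 230.0}]
    print(answer("Is density correlated with heat resistance?", data))
```

Because the response carries the operation, the fields and the sample size alongside the value, a reader can trace each figure back to the data and method that produced it.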

The assistant isn’t built for show—it’s built to be used. LabV’s approach reflects best-practice machine learning workflows: data generation, preparation, model training, deployment and maintenance—keeping predictive insights accurate, explainable and current. Powered by Wolfram’s tech stack, a single prompt can return correlation tables, batch-level comparisons or supplier performance charts in seconds, using both historical and current data.

Real Results, Not Just Output

LabV replaced fragmented workflows with prompt-driven analysis powered by Wolfram’s back end. Batch issues that once went unnoticed were flagged in seconds. One manufacturer reported a 10% reduction in engineering hours by running fewer tests without sacrificing quality—time they could redirect toward faster development.

In coatings R&D, the assistant has identified optimal formulations for extreme environmental conditions by combining historical data with defined requirements, cutting development cycles and improving resource efficiency. Visual outputs improved supplier communication, and customized data handling reduced errors—helping the lab meet standards without extra head count or production delays.

Plus, the project’s six-week delivery window showed the team could move quickly without cutting corners. That speed, paired with Wolfram’s technical foundation, helped validate LabV’s approach and reinforced its credibility as a scalable platform for lab data oversight.

From Complexity to a Competitive Edge

LabV’s results were made possible by a system built to handle complexity from the start. Wolfram’s tech stack supports legacy data, real-time queries and evolving compliance needs. For leaders, that kind of scalability isn’t theoretical—it’s what makes automation sustainable under real-world demands.

Wolfram’s hybrid approach—combining a language model front end with a computational back end—delivers usable results without guesswork. Prompts return verifiable outputs, not vague summaries. Teams can surface patterns, outliers or points of interest in moments, interpret their significance and rapidly iterate through potential solutions—giving LabV clients an agility advantage in R&D and quality control.

By embedding a computational layer between the language model and the underlying data, Wolfram Consulting ensures AI output isn’t just plausible—it’s correct, explainable and backed by traceable sources. Since machine learning models return probabilities rather than certainties, having verifiable computation in the loop means every result can be trusted and acted upon. That’s why it’s not just about adding AI—it’s about making your data work for you.

When you’re ready to move beyond fragmented data, Wolfram Consulting can help you build the system that makes better decisions possible.